Building a Real-Time AI Avatar Assistant with OpenAI Realtime + HeyGen

Debakshi B.

Nov 27, 2025

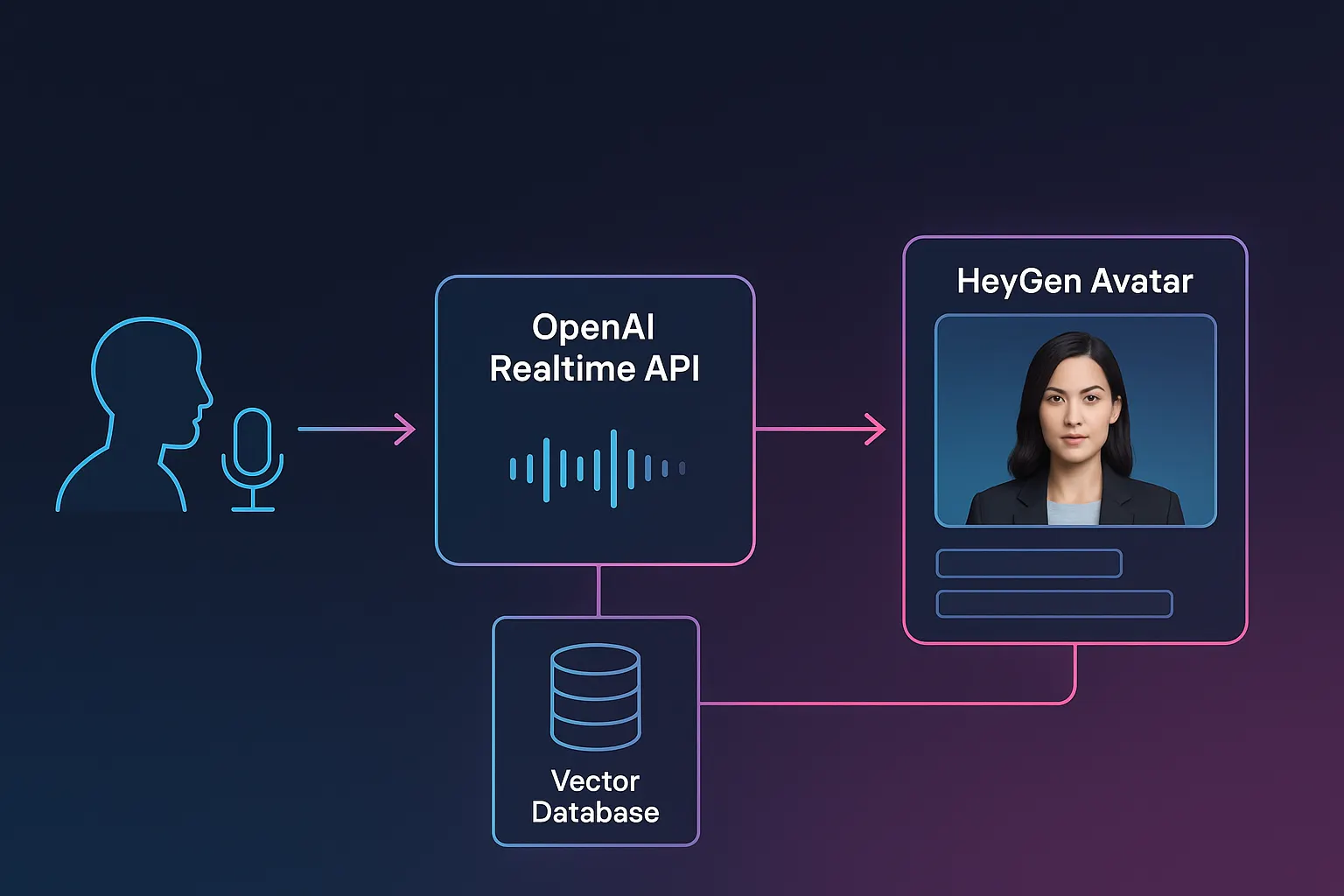

Real-time voice interfaces once required a patchwork of APIs, sockets, and custom audio pipelines. Today, we can build fully interactive, streaming multimodal experiences — complete with live transcription, dynamic responses, and photorealistic avatars — using only a few well-structured components.

In this post, we’ll walk through how to build an end-to-end conversational system that uses:

1. OpenAI’s Realtime API for two-way speech interaction

2. HeyGen’s Streaming Avatar for natural, synchronized video responses

3. A vector database (Pinecone) for retrieval-augmented answers

4. A small backend to broker authentication and embeddings

5. A browser client for audio capture, VAD, and the live avatar

We’ll focus on the architecture, code flow, and practical lessons from building a production-ready experience.

What Can We Make With This?

Customer Support Digital Agent

- Avatar explains troubleshooting steps

- Knowledge base retrieves product policies

- Realtime speech lowers user friction

Medical or Enterprise Training Simulators

- Avatars act as trainers

- Realtime voice makes scenarios feel human

- Vector search retrieves curriculum content

AI Concierge Experiences

- Hotel lobby kiosks

- Retail store assistants

- Airport help desks

Hands-free Productivity Tools

- Developers speak aloud to a real-time coding assistant

- Avatars provide explanations, summaries, or walkthroughs

Why Real-Time Matters

Traditional chat UIs wait for turn-based messages. Real-time agents behave differently: they listen continuously, transcribe speech as you talk, and respond in streaming fragments. It’s a completely different UX — and much more natural.

When we add a talking avatar, the goal shifts again: responses need to arrive fast, appear human, and stay adaptable to context. That requires a pipeline where:

1. User audio is streamed.

2. Speech detection identifies when the user actually speaks.

3. The Realtime API performs transcription + intent handling.

4. Vector search injects domain knowledge.

5. A streaming avatar speaks the response in sync with the Realtime output.

Let’s break down how the pieces connect.

Key components:

Backend (Node + Express)

- Issues client secrets for the Realtime WebRTC session

- Generates embeddings for Pinecone queries

- Requests HeyGen access tokens

Browser Client

- Captures microphone audio

- Uses VAD (Voice Activity Detection) to detect speech

- Streams audio into the Realtime session

- Receives streaming transcripts and final responses

- Sends complete responses to the HeyGen avatar to speak

OpenAI Realtime Session

- Transcribes speech

- Executes the agent prompt

- Calls the knowledge_base tool when needed

- Streams text deltas and final responses

HeyGen Avatar

- Displays a photorealistic talking head

- Speaks the assistant’s completed response

- Streams video directly to the browser

The glue between these pieces is a set of tight event loops that respond to speech start/end, text deltas, and avatar playback.

How the Realtime API Fits In

The Realtime API handles two critical tasks:

1. Live Transcription

As raw PCM audio streams in, input transcription events fire in real time. These events carry the user’s spoken text, which we surface in the UI and store in the agent’s context.

2. Streaming Responses

The model outputs tokens incrementally:

- response.output_text.delta → partial text chunks

- response.output_text.done → full response finalized

Your code listens for both events.

This allows the UI (and avatar) to receive feedback immediately, even before a sentence finishes — an essential detail for natural UX.
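A minimal sketch of such a listener, assuming each server event arrives as a JSON string (for example over the WebRTC data channel) and that the delta event carries a `delta` field while the done event carries the final `text`. The `createResponseRouter` helper and its handler names are hypothetical, not part of any SDK.

```typescript
// Minimal shape of the server events this sketch cares about.
// The `delta` and `text` field names are assumptions about the event payloads.
interface RealtimeServerEvent {
  type: string;
  delta?: string;
  text?: string;
}

interface TranscriptHandlers {
  onDelta: (delta: string) => void;
  onComplete: (fullText: string) => void;
}

// Returns a function to call with every raw message received from the session.
function createResponseRouter(handlers: TranscriptHandlers): (raw: string) => void {
  let buffer = "";
  return (raw: string): void => {
    const event = JSON.parse(raw) as RealtimeServerEvent;
    switch (event.type) {
      case "response.output_text.delta":
        buffer += event.delta ?? "";
        handlers.onDelta(event.delta ?? "");
        break;
      case "response.output_text.done":
        // Prefer the server's final text; fall back to the accumulated deltas.
        handlers.onComplete(event.text ?? buffer);
        buffer = "";
        break;
      default:
        break; // Other event types are ignored in this sketch.
    }
  };
}
```

In the browser, `onDelta` and `onComplete` would be the natural places to dispatch the `realtimeTranscriptDelta` and `realtimeTranscriptComplete` custom events that the avatar code listens for.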

How we configure the session

We intentionally set text-only output because HeyGen handles speech synthesis on its own.

Fixing Output Modalities

One tricky part of the Realtime API is getting text-only mode to actually stick. The docs mention output modalities, but they don’t explain that the setting you pass when creating the session doesn’t last. The session quietly resets to audio+text, so the model keeps producing audio even if you disable every TTS feature on your side.

The fix is simple but easy to miss: you must send a session.update event right after the session is created.

That update locks the modality to ["text"]. Without it, the API keeps sending audio packets and your text-only pipeline behaves strangely, especially when you plug it into something like a HeyGen avatar flow.

Once you add the update call, the Realtime assistant finally stops generating audio and the rest of the system works as expected.
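The update can be sketched as follows. This assumes the current event shape, where the field is called `output_modalities` and the session object carries `type: "realtime"`; older beta versions of the API used `modalities` instead, so check the version you are targeting. `EventChannel` and `lockTextOnlyOutput` are hypothetical names, with `EventChannel` standing in for whatever transport carries your client events (for example an `RTCDataChannel`).

```typescript
// Anything that can send a serialized client event, such as an open RTCDataChannel.
interface EventChannel {
  send(data: string): void;
}

interface SessionUpdateEvent {
  type: "session.update";
  session: {
    type: "realtime";
    output_modalities: string[];
  };
}

// Builds the event that asks the session to produce text only.
// The field names are assumptions about the API version in use.
function buildTextOnlySessionUpdate(): SessionUpdateEvent {
  return {
    type: "session.update",
    session: { type: "realtime", output_modalities: ["text"] },
  };
}

// Call this once, right after the session reports that it has been created.
function lockTextOnlyOutput(channel: EventChannel): void {
  channel.send(JSON.stringify(buildTextOnlySessionUpdate()));
}
```

A reasonable place to call `lockTextOnlyOutput` is the handler for the first `session.created` event, so the update is sent before any response is generated.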

Adding Domain Knowledge with Vector Search

Most real-world assistants need access to private knowledge: documentation, transcripts, policies, FAQs, or project data.

Here we integrate a Pinecone index via a custom tool, knowledge_base.

The agent’s system prompt explicitly instructs the model to call this tool whenever a question needs domain knowledge.

This means the model will automatically:

1. Decide when grounding is needed

2. Retrieve embedding matches

3. Incorporate that data into its final, streamed response

This is retrieval-augmented generation without extra orchestration code.
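As an illustration, here is one way the tool definition and its handler might look. Only the tool name `knowledge_base` comes from this post; the description, the `query` parameter, and the `embed` and `search` dependencies are assumptions. In a real build, `embed` would call the backend's embeddings endpoint and `search` would wrap a Pinecone query with metadata included.

```typescript
// Function-tool definition in JSON-schema style. Parameter names are illustrative.
const knowledgeBaseTool = {
  type: "function",
  name: "knowledge_base",
  description: "Look up passages from the private knowledge base.",
  parameters: {
    type: "object",
    properties: {
      query: { type: "string", description: "Natural-language search query" },
    },
    required: ["query"],
  },
} as const;

interface KbMatch {
  score: number;
  metadata: { text: string; source?: string };
}

// Injected dependencies keep the handler independent of any specific SDK.
interface KbDeps {
  embed: (text: string) => Promise<number[]>;
  search: (vector: number[], topK: number) => Promise<KbMatch[]>;
}

// Embeds the query, fetches the nearest matches, and formats them for the model.
async function runKnowledgeBaseTool(
  args: { query: string },
  deps: KbDeps,
  topK: number = 3
): Promise<string> {
  const vector = await deps.embed(args.query);
  const matches = await deps.search(vector, topK);
  if (matches.length === 0) {
    return "No relevant passages found.";
  }
  return matches
    .map((m, i) => `[${i + 1}] ${m.metadata.text} (source: ${m.metadata.source ?? "unknown"})`)
    .join("\n");
}
```

Returning the source alongside each passage gives the model something concrete to attribute its answer to.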

Connecting HeyGen to the Loop

Once the Realtime API finalizes a response, we pass the transcript to the HeyGen Streaming Avatar.

A few important details from the implementation:

1. Avatar session bootstrapping

Your backend issues a token:
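A minimal sketch of that token exchange, assuming HeyGen's `streaming.create_token` endpoint, an `x-api-key` header, and a response of the form `{ data: { token } }`. The `fetchHeygenToken` helper and the `FetchLike` type are hypothetical; the fetch function is injected so the logic can run without a network.

```typescript
// Assumed endpoint for creating a short-lived streaming token.
const HEYGEN_TOKEN_URL = "https://api.heygen.com/v1/streaming.create_token";

interface MinimalResponse {
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}

type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string> }
) => Promise<MinimalResponse>;

// Exchanges the long-lived API key (server side only) for a session token.
async function fetchHeygenToken(apiKey: string, fetchImpl: FetchLike): Promise<string> {
  const res = await fetchImpl(HEYGEN_TOKEN_URL, {
    method: "POST",
    headers: { "x-api-key": apiKey },
  });
  if (!res.ok) {
    throw new Error(`HeyGen token request failed with status ${res.status}`);
  }
  const body = (await res.json()) as { data?: { token?: string } };
  const token = body.data?.token;
  if (!token) {
    throw new Error("HeyGen response did not include a token");
  }
  return token;
}
```

An Express route such as `/api/getToken` could call this with the global `fetch` and return only the token to the browser, so the API key never leaves the server.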

Then the browser spins up the avatar:

    avatar = new StreamingAvatar({ token });
    sessionData = await avatar.createStartAvatar({
      quality: AvatarQuality.High,
      avatarName: AVATAR_CONFIG.avatarName,
      voice: { voiceId: AVATAR_CONFIG.voiceId },
    });

2. Handling streaming video

HeyGen streams a MediaStream; your client assigns it directly:

The result is a live, speaking avatar that feels reactive and believable.
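The stream assignment mentioned above might look like the sketch below. It assumes the SDK hands you the `MediaStream` in a stream-ready callback (in the HeyGen SDK this is typically the `detail` of a `STREAM_READY` event, but verify against your SDK version). `attachAvatarStream` is a hypothetical helper, typed structurally so it accepts an `HTMLVideoElement` without depending on DOM types.

```typescript
// Structural stand-in for HTMLVideoElement: only the members this sketch needs.
interface VideoSink<S> {
  srcObject: S | null;
  play(): Promise<void>;
}

// Points the video element at the avatar stream and starts playback.
function attachAvatarStream<S>(video: VideoSink<S>, stream: S): Promise<void> {
  video.srcObject = stream;
  return video.play();
}
```

Browsers may reject `play()` until the user has interacted with the page, so it is worth starting the avatar from a click handler and handling the returned promise.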

3. Syncing with the response pipeline

The client waits for response.output_text.done or realtimeTranscriptComplete before triggering speech so the avatar speaks complete thoughts.

    function setupRealtimeListeners() {
      console.log("Setting up Realtime listeners for HeyGen integration");

      // User transcripts
      window.addEventListener(
        "userTranscript",
        ((event: CustomEvent) => {
          const { transcript } = event.detail;
          console.log("User transcript event received:", transcript);
          try {
            addToChat("user", transcript);
          } catch (e) {
            console.warn("Could not add user message to chat:", e);
          }
        }) as EventListener
      );

      // Assistant transcript deltas
      window.addEventListener(
        "realtimeTranscriptDelta",
        ((event: CustomEvent) => {
          const { delta } = event.detail;
          currentTranscriptBuffer += delta;
          console.log(
            "Transcript delta received, buffer now:",
            currentTranscriptBuffer.substring(0, 50) + "..."
          );
        }) as EventListener
      );

      // Complete assistant transcript
      window.addEventListener(
        "realtimeTranscriptComplete",
        ((event: CustomEvent) => {
          const { transcript } = event.detail;
          handleRealtimeTranscript(transcript);
        }) as EventListener
      );

      // Errors
      window.addEventListener(
        "realtimeError",
        ((event: CustomEvent) => {
          const { error } = event.detail;
          console.error("Realtime error received:", error);
          updateStatus("Error in voice session", "error");
        }) as EventListener
      );

      console.log("Realtime listeners setup complete");
    }

Partial deltas are still shown in the UI for responsiveness.
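The listener code calls `handleRealtimeTranscript`, whose body is not shown in this post. One plausible shape for it is sketched below, under the assumption that it skips empty transcripts, logs the message to the chat, and then asks the avatar to speak. The `AvatarSpeaker` interface is a hypothetical wrapper around the avatar SDK's speak call.

```typescript
// Hypothetical wrapper around the avatar SDK's speak call.
interface AvatarSpeaker {
  speak(text: string): Promise<void>;
}

type ChatLogger = (role: "assistant", text: string) => void;

// Builds a handler that speaks only complete, non-empty transcripts.
function createTranscriptHandler(
  speaker: AvatarSpeaker,
  addToChat: ChatLogger
): (transcript: string) => Promise<boolean> {
  return async function handleRealtimeTranscript(transcript: string): Promise<boolean> {
    const text = transcript.trim();
    if (text.length === 0) {
      return false; // Nothing to say, so the avatar stays idle.
    }
    addToChat("assistant", text);
    await speaker.speak(text);
    return true;
  };
}
```

Returning a boolean makes it easy for the caller to decide whether to clear the delta buffer or update a status indicator.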

Voice Activity Detection for a Natural UX

Instead of recording nonstop, the browser uses MicVAD:

This lets us:

- Avoid flooding the session with silence

- Detect user intent to speak

- Auto-close sessions after inactivity

- Reduce latency and token usage

Users simply talk; the system handles turn-taking automatically.
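The auto-close behavior can be sketched independently of the VAD library. The tracker below is a hypothetical helper: the VAD's speech-start and speech-end callbacks would call `markActivity`, and a periodic check would call `shouldClose`. Timestamps are passed in explicitly so the logic stays easy to test.

```typescript
interface InactivityTracker {
  markActivity(nowMs: number): void;
  shouldClose(nowMs: number): boolean;
}

// Tracks the last moment speech was detected and reports when the
// session has been silent for at least `timeoutMs` milliseconds.
function createInactivityTracker(timeoutMs: number, startMs: number): InactivityTracker {
  let lastActivityMs = startMs;
  return {
    markActivity(nowMs: number): void {
      lastActivityMs = nowMs;
    },
    shouldClose(nowMs: number): boolean {
      return nowMs - lastActivityMs >= timeoutMs;
    },
  };
}
```

In the browser you might call `tracker.markActivity(Date.now())` from the VAD callbacks and poll `tracker.shouldClose(Date.now())` every few seconds to tear down the Realtime and avatar sessions.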

Putting It All Together: Request Flow

1. User starts a session

Frontend requests /api/session → receives OpenAI client secret → Realtime session connects.

2. Use VAD to prevent false starts

Without VAD, background noise triggers streaming and wastes tokens.

3. Keep the agent prompt simple and declarative

The model follows tool instructions more reliably when the expectations are explicit and unambiguous.

4. Proxy all secrets through a backend

Your /api/session and /api/getToken endpoints ensure no API keys leak to browsers.

5. Pinecone queries should return metadata at minimum

Metadata lets the model attribute information to its source and reduces the risk of hallucination.
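To make the secret-proxying point concrete, here is a sketch of what the backend behind `/api/session` might build and parse. It assumes the Realtime client-secrets endpoint, a `{ session: { type: "realtime", model } }` request body, and a response whose ephemeral key is in a top-level `value` field; verify all three against the API version you use. Both helper names are hypothetical.

```typescript
interface ClientSecretRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Builds the server-side request that exchanges the real API key for an
// ephemeral client secret. Endpoint and body shape are assumptions.
function buildClientSecretRequest(apiKey: string, model: string): ClientSecretRequest {
  return {
    url: "https://api.openai.com/v1/realtime/client_secrets",
    method: "POST",
    headers: {
      Authorization: `Bearer ${apiKey}`,
      "Content-Type": "application/json",
    },
    body: JSON.stringify({ session: { type: "realtime", model } }),
  };
}

// Pulls the ephemeral secret out of the parsed response body, so the
// route can return only that value to the browser.
function extractClientSecret(responseBody: unknown): string {
  const value = (responseBody as { value?: unknown } | null)?.value;
  if (typeof value !== "string" || value.length === 0) {
    throw new Error("Response did not contain a client secret");
  }
  return value;
}
```

The route handler would send the built request with `fetch`, pass the parsed JSON to `extractClientSecret`, and respond with the ephemeral value only.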

Final Thoughts

What makes this stack compelling is how seamlessly the pieces fit together. The Realtime API handles transcription and reasoning, Pinecone grounds responses in private knowledge, and HeyGen transforms text into a lifelike presence. The result feels less like a chatbot and more like a real interactive companion.

If you’re building the next generation of conversational interfaces — products where people speak naturally, get grounded answers, and interact through expressive avatars — this architecture is an excellent starting point.